Role: Lead Product Designer

The problem

Most of the time providers spend at work isn't with patients. It's spent documenting.

Every clinical encounter generates hours of follow-on work: progress notes, structured fields for billing and coding, prior-authorization paperwork, care plans. The documentation isn't optional — it's how the system pays for care, tracks outcomes, and protects against liability — but the cost is real. Providers burn out. Notes get rushed or copy-pasted. Critical context gets lost between visits.

The promise of LLMs in this space was obvious. The hard part was making it useful without making it dangerous.

Approach

The design challenge wasn't "can AI write a clinical note?" It has been able to do that for a while. The challenge was: can AI write a note that a clinician will trust, verify, and sign?

That distinction shaped everything. We designed the system around three principles:

- Provider-in-the-loop, always. The AI proposes; the clinician disposes. Every section of every note remains editable, and the provider sees what the AI generated and what context informed it.

- Structured data extracted from natural conversation. Rather than asking providers to fill out forms, the system listens to the visit and structures the relevant content — chief complaint, history of present illness, assessment, plan.

- Active prompting for diagnostic completeness. The system prompts patients (or clinicians) to fill gaps that would otherwise leave the note incomplete or the differential under-developed.

Solution

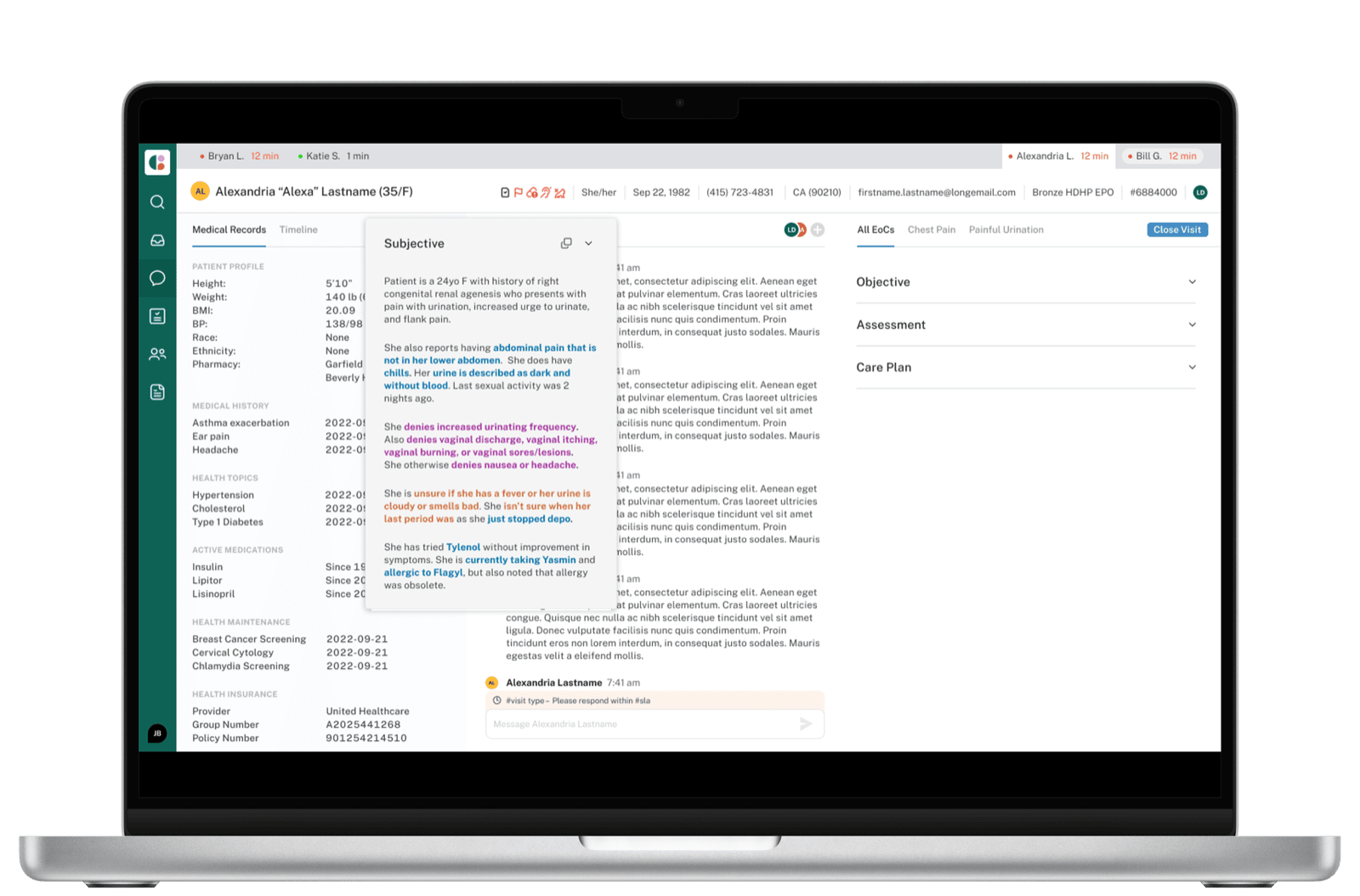

The clinician interface centers on a live, sectioned note that updates as the conversation progresses. The structure follows the standard SOAP format — Subjective, Objective, Assessment, Plan — but each section is populated by the AI from the source dialogue, with the source surfaced inline so verification is fast.

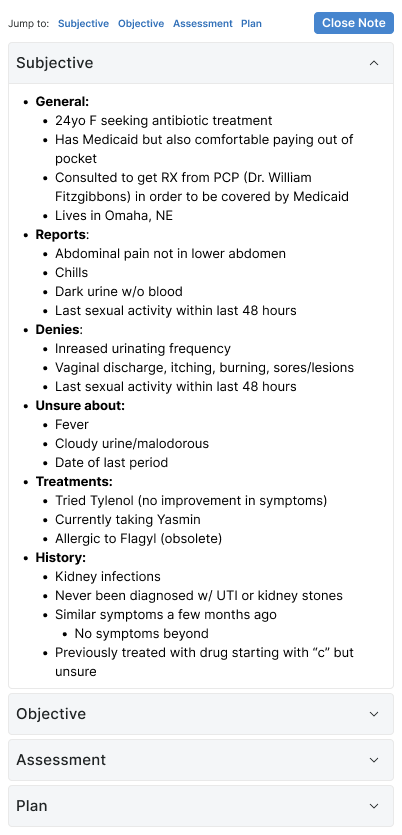

Subjective

The Subjective section is where AI extraction earns its keep. Patient histories arrive as long, meandering chat threads — providers used to spend significant time scanning for what's clinically relevant. The system structures the dialogue into a hierarchy that mirrors how clinicians actually think about a history: General, Reports, Denies, Unsure about, Treatments, History.

This isn't just summarization — it's a re-organization that respects clinical reasoning. "Denies" and "Unsure about" are first-class categories because the absence of a symptom is often as informative as its presence.
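As a rough illustration, the hierarchy above could be modeled as a small data structure. This is a minimal sketch, not the production schema: only the six category names come from the design, while `Finding`, `SubjectiveSection`, the `source_quote` field, and the sample patient wording are hypothetical.

```python
from dataclasses import dataclass, field
from typing import Dict, List

# Category names are taken from the design; everything else here is hypothetical.
CATEGORIES = ("General", "Reports", "Denies", "Unsure about", "Treatments", "History")


@dataclass
class Finding:
    text: str          # clinician-facing summary of the finding
    source_quote: str  # patient wording the finding was drawn from, kept for verification


@dataclass
class SubjectiveSection:
    # Every category exists up front, so an empty "Denies" list is visible, not missing.
    findings: Dict[str, List[Finding]] = field(
        default_factory=lambda: {name: [] for name in CATEGORIES}
    )

    def add(self, category: str, finding: Finding) -> None:
        if category not in self.findings:
            raise ValueError(f"unknown category: {category}")
        self.findings[category].append(finding)


# Made-up sample content, for illustration only.
section = SubjectiveSection()
section.add("Reports", Finding("Headache for 3 days", "my head has been pounding since Monday"))
section.add("Denies", Finding("Fever", "no, I haven't had a temperature"))
```

Keeping "Denies" and "Unsure about" as explicit keys, rather than flags on a generic symptom list, reflects the point that an absent symptom is itself a finding.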

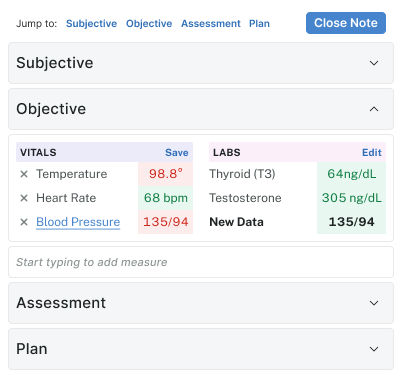

Objective

Objective is mostly numeric — vitals and labs. The design challenge here was visual scannability under fatigue. Color encoding (red for out-of-range, green for normal) draws the eye to what needs attention without making everything else feel like noise.
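The color rule can be sketched in a few lines. This assumes each reading carries its own reference range with inclusive bounds; the `Reading` type, the `color_for` function, and the sample values are hypothetical placeholders, not clinical guidance or the shipped logic.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Reading:
    name: str
    value: float
    low: float   # lower bound of the reference range (assumed inclusive)
    high: float  # upper bound of the reference range (assumed inclusive)


def color_for(reading: Reading) -> str:
    """Return "green" when the value sits inside its reference range, else "red"."""
    in_range = reading.low <= reading.value <= reading.high
    return "green" if in_range else "red"


# Placeholder values for illustration only.
normal = Reading("Heart rate", 72, 60, 100)
elevated = Reading("Heart rate", 118, 60, 100)
```

Here `color_for(normal)` yields `"green"` and `color_for(elevated)` yields `"red"`. Only two states are modeled because the design describes only two.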

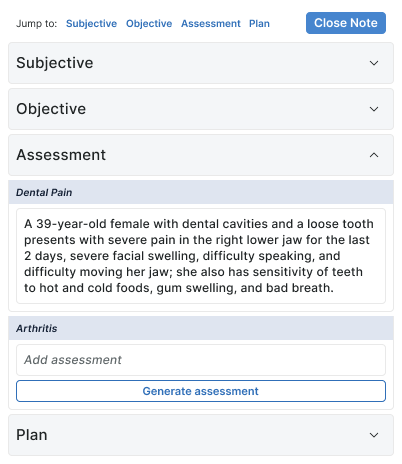

Assessment

Assessment is where the AI moves from extraction to synthesis. It generates a clinical narrative for each Episode of Care (EoC), but every assessment has a "Generate" affordance and stays editable, so the provider's voice and judgment stay in the note.

The two-state design (generated vs. blank-with-generate-button) was deliberate. Some EoCs warrant AI assistance; others are simple enough that the clinician would rather write a sentence than review a paragraph. The interface respects that choice.

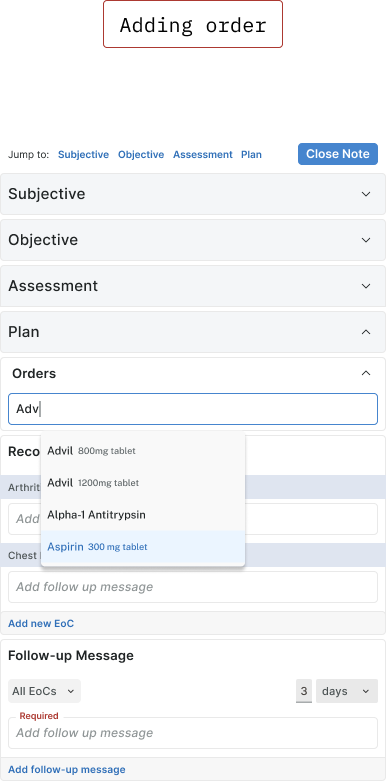

Plan

Plan is where the most consequential decisions get made — orders, prescriptions, follow-ups — and where the cost of error is highest. The Plan section is built around clinical safety rails: drug interaction warnings inline, required override reasons, pharmacy details, episode-of-care attribution, and follow-up message scheduling.

The layout is dense because it has to be. Reducing prescriber cognitive load doesn't mean hiding information; it means making the most important information impossible to miss.
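One of these rails, the required override reason, might look like the following in code. This is a sketch under stated assumptions: the `Prescription` type and `can_sign` function are hypothetical, and the real system may enforce the rule differently.

```python
from dataclasses import dataclass, field
from typing import List, Optional


@dataclass
class Prescription:
    drug: str
    # Interaction warnings raised for this order (hypothetical field).
    interaction_warnings: List[str] = field(default_factory=list)
    # Free-text reason the prescriber gives when proceeding despite a warning.
    override_reason: Optional[str] = None


def can_sign(rx: Prescription) -> bool:
    """Allow signing if there are no warnings, or if a non-blank override reason exists."""
    if not rx.interaction_warnings:
        return True
    return bool(rx.override_reason and rx.override_reason.strip())


# Placeholder drug names for illustration only.
blocked = Prescription("Drug A", interaction_warnings=["Interacts with Drug B"])
allowed = Prescription(
    "Drug A",
    interaction_warnings=["Interacts with Drug B"],
    override_reason="Benefit outweighs risk; patient monitored",
)
```

The warning does not block the order outright. It blocks signing until the prescriber records why they are proceeding, which keeps the decision with the clinician while leaving a trail.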

What this kind of work demands

The hardest design decisions in AI products aren't about visual interfaces. They're about trust calibration: how much confidence the system projects, how it surfaces uncertainty, how it makes its reasoning legible enough to be challenged. Healthcare amplifies all of those decisions — the cost of misplaced trust is high, and the cost of useless caution is also high.

The right answer was not "make AI invisible" or "make AI prominent." It was: make AI's contribution legible at exactly the moment a clinician is deciding whether to trust it.

This work is ongoing.